With TJ Byers

Pardon My Impedance

Question:

How is the internal resistance of a power supply measured and kept at a low value? How important is this?

Richard H. Abeles

Answer:

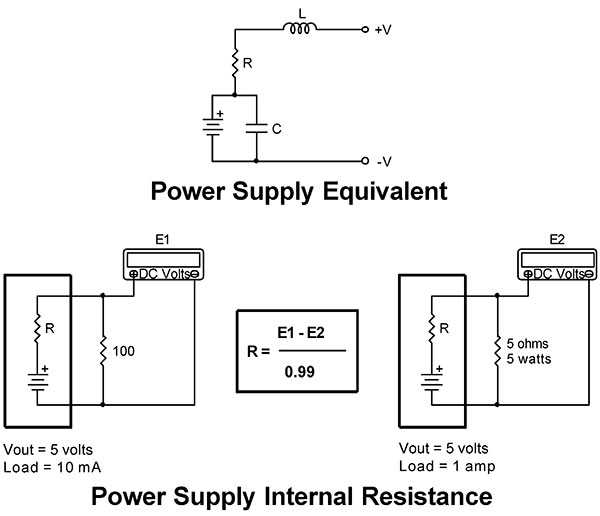

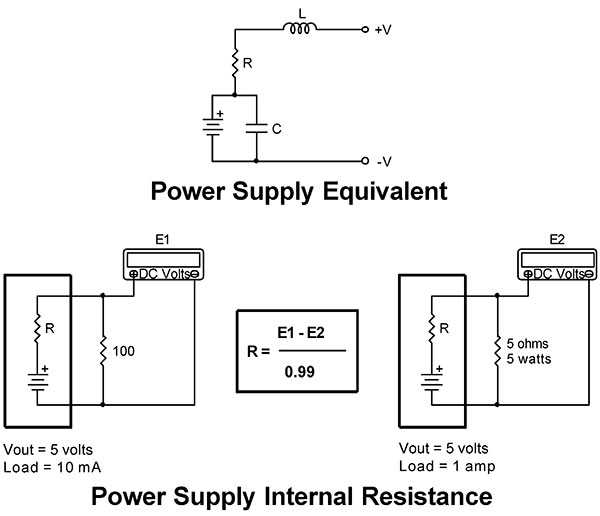

The internal resistance of a power supply has a direct effect on its output voltage under load. A typical power supply contains resistance, capacitance, and inductance. Most of the internal resistance is in the unit's wiring. The regulator can react to voltage changes in its immediate vicinity, but it can't compensate for the voltage drop in the output wires or panel connectors.

All you need to measure the internal resistance of any power source, including batteries, is a DVM and two resistors. The resistors are chosen to load the supply at the low and high ends of its output current range. For this example, they draw 10 mA and one amp, respectively. Measure the output voltage with each resistor connected: E1 with the light load (I1 = 10 mA) and E2 with the heavy load (I2 = 1 A). Subtracting E2 from E1 gives the voltage dropped across the internal resistance, R. Using Ohm's Law, R = (E1 - E2) / (I2 - I1). Let's say the difference is 0.2 volts. Plugging in the values, 0.2 V / 0.99 A gives an internal resistance of about 200 milliohms. While this may not seem like a lot, the drop grows as the output current increases. At five amps, 200 milliohms will consume one volt.
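The two-load arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not a standard tool: the function names `load_current`, `internal_resistance`, and `voltage_drop` are hypothetical, and the 12.0 V / 11.8 V readings in the usage lines are assumed values chosen only to reproduce the 0.2 V difference from the example. When only a DVM is available, each current can be worked out from the measured voltage and the known load resistor value with Ohm's Law.

```python
def load_current(voltage, load_ohms):
    """Current through a known load resistor, from the DVM reading (I = E / R)."""
    return voltage / load_ohms


def internal_resistance(e1, i1, e2, i2):
    """Internal resistance from two loaded readings.

    e1, i1: output voltage and current with the light load
    e2, i2: output voltage and current with the heavy load
    """
    if i2 == i1:
        raise ValueError("the two load currents must differ")
    # The extra voltage lost at the heavier load is dropped across the
    # internal resistance, so R = (E1 - E2) / (I2 - I1).
    return (e1 - e2) / (i2 - i1)


def voltage_drop(r_internal, current):
    """Voltage lost inside the supply at a given output current."""
    return r_internal * current


# Hypothetical readings: 12.0 V at 10 mA, 11.8 V at 1 A
r = internal_resistance(12.0, 0.010, 11.8, 1.0)
print(f"internal resistance: {r * 1000:.0f} milliohms")
print(f"drop at 5 A with 200 milliohms: {voltage_drop(0.2, 5.0):.1f} V")
```

Running it prints an internal resistance of about 202 milliohms (0.2 V / 0.99 A) and a one-volt drop at five amps, matching the rounded figures in the text.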

As I said, this method can also be used to test dry cell batteries, even rechargeable ones. In fact, it is often used to gauge the charge left in a NiCd or NiMH battery. As the charge decreases, the internal resistance increases. By keeping tabs on the internal resistance, you can estimate how much charge remains.
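One way to turn a resistance reading into a charge estimate is linear interpolation against a calibration table. The sketch below assumes you have already recorded (internal resistance, percent charge) pairs on a reference cell of the same type; the function name `estimate_charge` and the three calibration points in the usage lines are hypothetical placeholders, not real battery data.

```python
from bisect import bisect_left


def estimate_charge(r_measured, calibration):
    """Linearly interpolate percent charge from a measured internal resistance.

    calibration: (resistance_ohms, charge_percent) pairs taken on a reference
    cell; resistance rises as charge falls. Readings outside the table are
    clamped to its end points.
    """
    points = sorted(calibration)          # ascending resistance
    rs = [r for r, _ in points]
    if r_measured <= rs[0]:
        return points[0][1]
    if r_measured >= rs[-1]:
        return points[-1][1]
    i = bisect_left(rs, r_measured)       # rs[i - 1] < r_measured <= rs[i]
    (r_lo, c_lo), (r_hi, c_hi) = points[i - 1], points[i]
    frac = (r_measured - r_lo) / (r_hi - r_lo)
    return c_lo + frac * (c_hi - c_lo)


# Hypothetical calibration points, for illustration only
table = [(0.10, 100.0), (0.15, 50.0), (0.30, 0.0)]
print(f"{estimate_charge(0.125, table):.0f}% charge remaining")
```

A straight-line fit between neighboring points keeps the sketch simple; a real cell's resistance curve is not linear, so more calibration points give a better estimate.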
