With TJ Byers

PWM Fine Tuning

Question:

I want to control the speed of a brushed DC motor (rated 12 volts at one amp) using PWM with an FET as the power driver. At present, I plan to use a 1 kHz signal with a 30% to 60% duty cycle (300 µs to 600 µs of on-time in a 1 ms period) to control the motor's speed. My question: How do I determine the optimum PWM frequency? Would it be better to operate the PWM at 3 kHz? And, if so, why would it be better: efficiency, power dissipation, or power consumption?

Anonymous

via Internet

Answer:

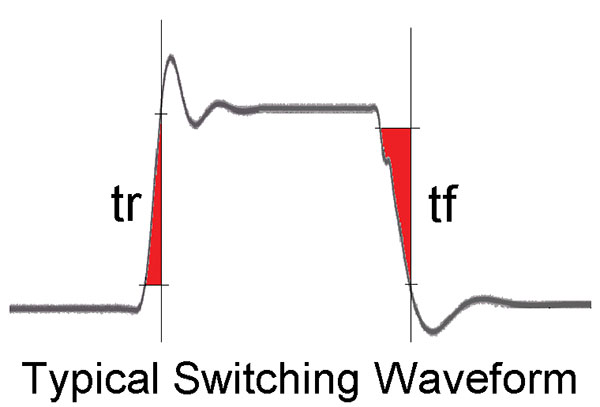

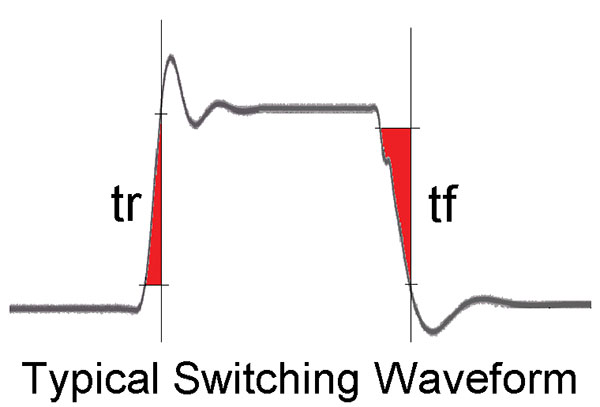

The ideal PWM controller combines high-speed power MOSFETs with low switching frequencies to achieve extremely high efficiency in a very small package. The reason for this seemingly contradictory arrangement is to minimize the time the power transistor spends in its active (linear) region. When a MOSFET is switched on or off, it doesn't respond instantly. It takes time to make the transition, and during that interval the transistor is carrying current while a substantial voltage is still across it, so it wastes power and dissipates heat. This region is highlighted in red in the figure.
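A common first-order way to put a number on that wasted energy assumes that, during each edge, the drain voltage and current cross over roughly linearly. Under that assumption, each PWM cycle costs about half the product of supply voltage, load current, and total transition time, where tr is the turn-on (rise) time and tf is the turn-off (fall) time:

$$E_{\text{cycle}} \approx \tfrac{1}{2}\,V\,I\,(t_r + t_f)$$

For the reader's 12 V, 1 A motor with hypothetical 100 ns edges, that works out to ½ × 12 V × 1 A × 200 ns = 1.2 µJ per cycle. This is an estimate only; real waveforms, especially with an inductive motor load, differ from the linear model.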

The less time spent in the tr (rise) and tf (fall) regions, the red areas, the more efficient the controller. These times depend mainly on the MOSFET itself (and on how hard its gate is driven) and are specified in nanoseconds on the datasheet. As for the slower repetition rate: the fewer times per second the MOSFET has to make these transitions, the fewer watts are wasted as heat and the more efficient the controller. Of course, the repetition rate still has to be high enough that the motor's response doesn't mimic a battleship making a U-turn.
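To see how the repetition rate enters the picture, here is a minimal Python sketch of the common first-order estimate P_sw ≈ ½ · V · I · (tr + tf) · f. It assumes linear voltage/current crossover during each edge and ignores conduction (on-resistance) and gate-drive losses. The 100 ns edge times and the function name `switching_loss_w` are hypothetical; substitute the values from your own MOSFET's datasheet.

```python
# Rough switching-loss estimate for a MOSFET PWM motor driver.
# Assumption: first-order model P_sw ~= 0.5 * V * I * (t_r + t_f) * f,
# i.e. voltage and current overlap linearly during each switching edge.
# Conduction and gate-drive losses are NOT included.


def switching_loss_w(v_supply: float, i_load: float,
                     t_rise_s: float, t_fall_s: float, f_pwm_hz: float) -> float:
    """Approximate average power (watts) lost in the switching transitions."""
    # Energy lost per PWM cycle: one turn-on edge plus one turn-off edge.
    energy_per_cycle_j = 0.5 * v_supply * i_load * (t_rise_s + t_fall_s)
    # Every cycle repeats those two edges, so loss scales with frequency.
    return energy_per_cycle_j * f_pwm_hz


if __name__ == "__main__":
    V, I = 12.0, 1.0        # the reader's motor: 12 V at 1 A
    T_R = T_F = 100e-9      # hypothetical 100 ns edges; use datasheet values
    for f in (1_000, 3_000, 15_000):
        p = switching_loss_w(V, I, T_R, T_F, f)
        print(f"{f / 1000:>4.0f} kHz: {p * 1e3:.1f} mW")
```

With these assumed numbers the estimate comes to 1.2 mW at 1 kHz, 3.6 mW at 3 kHz, and 18 mW at 15 kHz. Switching loss grows in direct proportion to frequency, so moving from 1 kHz to 3 kHz triples it; slower edges scale the result up in the same way.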

So what is the optimal PWM frequency? It depends on the motor, the starting load, and a lot more. Most commercial PWM controllers for DC motors of about 1/10 HP run between 5 kHz and 15 kHz.
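To relate the reader's 1 kHz plan to that 5 kHz to 15 kHz range, the sketch below computes the period, on-time, and average motor voltage at 30% and 60% duty cycle. It assumes ideal switching from a steady 12 V supply, so the average voltage is simply duty × 12 V; the function name `pwm_timing` is hypothetical.

```python
# On-time and average motor voltage for a given PWM frequency and duty cycle.
# Assumption: ideal switching from a steady supply, so V_avg = duty * V_supply.


def pwm_timing(f_pwm_hz: float, duty: float, v_supply: float = 12.0):
    """Return (period_s, on_time_s, average_voltage_v)."""
    if not 0.0 <= duty <= 1.0:
        raise ValueError("duty must be between 0 and 1")
    period_s = 1.0 / f_pwm_hz          # one full PWM cycle
    on_time_s = duty * period_s        # time the MOSFET is switched on
    return period_s, on_time_s, duty * v_supply


if __name__ == "__main__":
    for f in (1_000, 3_000, 5_000, 15_000):
        for duty in (0.30, 0.60):
            period, t_on, v_avg = pwm_timing(f, duty)
            print(f"{f / 1000:>4.0f} kHz, {duty:.0%}: period {period * 1e6:.1f} us, "
                  f"on {t_on * 1e6:.1f} us, avg {v_avg:.1f} V")
```

At 1 kHz this reproduces the reader's 300 µs to 600 µs on-times (3.6 V to 7.2 V average). Under the ideal-switch assumption, the duty cycle alone sets the average voltage; raising the frequency only shortens each pulse. Even at 15 kHz, a 30% pulse lasts 20 µs, still long compared with nanosecond switching edges.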
